“Can you go over Sensitivity-Specificity, and what that actually means for us in the operating room?”

Sensitivity-Specificity

I think the second part of that question is the most important (what does that mean for us in the OR?). If you’re taking the DABNM boards, of course, you’re going to need to know the definition. But being able to define sensitivity-specificity is far less important than being able to apply it.

But you have to start somewhere, so let’s start with some definitions so we can use them as building blocks to understanding.

Definitions:

Sensitivity: the proportion of patients with the disease who test positive.

Specificity: the proportion of patients without disease who test negative.

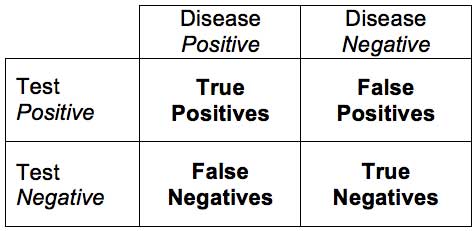

True Positive: correctly identified, or sick people correctly identified as sick.

True Negative: correctly rejected, or healthy people correctly identified as healthy.

False Positive: incorrectly identified, or healthy people incorrectly identified as sick.

False Negative: incorrectly rejected, or sick people incorrectly identified as healthy.
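To make the four labels concrete, here is a minimal Python sketch that sorts monitored cases into the four boxes. The function names (`classify_case`, `tally`) and the sample cases are made up for illustration. "Alert" stands for a significant monitoring change and "injury" for a new post-op deficit.

```python
from collections import Counter

def classify_case(injury: bool, alert: bool) -> str:
    """Label one case by comparing the monitoring call with the patient outcome."""
    if injury and alert:
        return "TP"  # injury occurred and monitoring flagged it
    if not injury and not alert:
        return "TN"  # no injury and no significant change
    if not injury and alert:
        return "FP"  # significant change called, but the patient woke up fine
    return "FN"      # injury occurred with no significant change

def tally(cases):
    """Count how many cases fall into each of the four categories."""
    return Counter(classify_case(injury, alert) for injury, alert in cases)

# Hypothetical cases, each written as (injury, alert).
cases = [(True, True), (False, False), (False, True), (True, False), (False, False)]
counts = tally(cases)
print(dict(counts))
```

Every sensitivity or specificity figure in a paper comes from counts like these, so it helps to know exactly how the authors decided which box each case went into.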

“Now we can start to add these concepts together to figure out what it means for our monitoring, and what the percentages mean that we see in the papers we read.”

Sensitivity

Sensitivity deals with one of the biggest “uh oh” moments we face in neuromonitoring. The cases that lower your overall monitoring sensitivity are the ones where the OR staff gets involved and starts discussing dropping your contract.

Here’s what it looks like: you record the case and see no changes. But the patient wakes with a problem when you thought there wouldn’t be one (false negative).

Now you have to prove that it was a shortcoming of the modality (poor sensitivity or poor understanding of what is being monitored) and not your ability to monitor/interpret the results.

Missing a problem when one occurs lowers your sensitivity because of the following equation:

Sensitivity = (# of true positives) / (# of true positives + # of false negatives)

Or

Sensitivity = (# of people with surgical injury detected by monitoring) / (# of people with surgical injury detected by monitoring + # of people with surgical injury that had no significant changes in monitoring)

Or

Sensitivity = probability of a significant monitoring change given there was an injury

So we would like our result to be 1, or 100%. If there is a deficit not picked up on monitoring, then our denominator is larger than our numerator, bringing our percentage down.

For example, 11 patients wake up with a deficit, but monitoring only picked up 10.

Sensitivity = (10) / (10 + 1)

= 0.91, or 91%
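The same arithmetic can be written as a short Python sketch. The function name `sensitivity` and its argument names are just illustrative choices. The only thing added beyond the equation is a guard for the case where nobody was injured, since the ratio is undefined there.

```python
def sensitivity(true_positives: int, false_negatives: int) -> float:
    """Fraction of injured patients whose injury was flagged by monitoring."""
    injured = true_positives + false_negatives
    if injured == 0:
        # No injuries at all: there is nothing to detect, so the ratio is undefined.
        raise ValueError("sensitivity is undefined when no patient had an injury")
    return true_positives / injured

# The example above: 11 patients woke with a deficit, monitoring caught 10.
print(f"{sensitivity(10, 1):.2f}")  # 0.91
```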

When reading papers, you have to check whether the modality being assessed was used for the correct purpose. For instance, SSEP monitors the dorsal column, or sensory tract. Is it fair to say that they had a false negative if there was damage to the anterior horn cells?

Specificity

Specificity is what we get challenged on all the time. It’s what sets off panic mode. You say “Doc, I have a change in SSEP!” S/he says, “Are you sure it isn’t technical? I didn’t do anything.” What s/he is saying is that your modality is not specific, or that your positive result (the change) does not reliably indicate patient injury. S/he is praying for a false positive, because that would mean the patient is OK even though you are saying there is a problem.

But the surgeon doesn’t want this to be a recurring situation either, because now s/he can’t trust you. A high number of false positives means poor specificity (which means, “Why the hell am I using you again? If this keeps up we need to discuss the problem…”), because of the equation used to find specificity:

Specificity = (# of true negatives) / (# of true negatives + # of false positives)

Or

Specificity = (# of people with no surgical injuries and no significant monitoring changes) / (# of people with no surgical injuries and no significant monitoring changes + # of people with no surgical injury but had significant monitoring changes)

Or

Specificity = probability of no significant monitoring change given there was no injury

So if someone new to the field is calling EMG for every burst they see, they have become a false positive machine. If they did 10 cases and made clinically significant calls in 5 of those cases, and all 10 patients woke up OK, then they have a poor specificity.

Specificity = (5) / (5+5)

= 0.5, or 50%
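Here is that example as a small Python sketch. The function name `specificity` and the `alerts` list are illustrative. The list just encodes the 10 cases above, none with a deficit, where a clinically significant call was made in 5.

```python
def specificity(true_negatives: int, false_positives: int) -> float:
    """Fraction of uninjured patients who had no significant monitoring change."""
    uninjured = true_negatives + false_positives
    if uninjured == 0:
        # Every patient was injured, so there are no negatives to score.
        raise ValueError("specificity is undefined when every patient had an injury")
    return true_negatives / uninjured

# 10 cases, all patients woke up OK; True marks a case where a significant call was made.
alerts = [True] * 5 + [False] * 5
false_positives = sum(alerts)                  # calls made with no injury behind them
true_negatives = len(alerts) - false_positives

print(specificity(true_negatives, false_positives))  # 0.5
```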

Application of Sensitivity-Specificity numbers to tcMEP

For our purposes, having a lower specificity is usually more desirable than having a lower sensitivity. And you can’t have it both ways. As criteria change to improve one, the other will suffer.

That’s why you’ll see some monitoring groups move away from the all-or-none criterion suggested for tcMEP. When you understand everything that goes into recording a muscle potential after stimulating the cortex through the cranium, a 100% reduction makes the most sense (and you can make an argument that the way amplitudes are being measured by most groups lacks accuracy). The specificity goes way up. But since there have been reports of post-op deficits with some CMAP response present, there is a reduction in the sensitivity.

Some groups have adopted a 75-80% reduction criterion to further minimize any loss of sensitivity, even though the drop in specificity is far greater.

Should a group choose to really cover their bases against false negatives and lower sensitivity, they might choose a 50% reduction in tcMEP as a significant change. From my experience, they would have a sensitivity of 100%, but their specificity would be unacceptable (I’m talking about tcMEP for the spinal cord, not brainstem or peripheral nerve monitoring here).

So this is one of the reasons that there are no agreed-upon alarm criteria for a lot of what we do, even if there are guidelines set by our associations.
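To see the trade-off in numbers, here is a toy Python sketch. Everything in it is an assumption made for illustration: the case list is invented (not from any study or from my own cases), and `sens_spec` is a made-up helper. It only shows the mechanics of how moving the alarm criterion shifts both numbers at once.

```python
# Hypothetical illustration only: all values below are invented for this sketch.
# Each tuple is (largest tcMEP amplitude reduction in percent, woke with a new deficit).
CASES = [
    (100, True), (100, True), (85, True), (60, True),
    (10, False), (20, False), (30, False), (40, False),
    (55, False), (65, False), (78, False), (82, False),
]

def sens_spec(cases, alarm_threshold_pct):
    """Return (sensitivity, specificity) when any reduction >= threshold counts as a change."""
    tp = sum(1 for drop, injured in cases if injured and drop >= alarm_threshold_pct)
    fn = sum(1 for drop, injured in cases if injured and drop < alarm_threshold_pct)
    tn = sum(1 for drop, injured in cases if not injured and drop < alarm_threshold_pct)
    fp = sum(1 for drop, injured in cases if not injured and drop >= alarm_threshold_pct)
    return tp / (tp + fn), tn / (tn + fp)

# Compare the all-or-none, 80% and 50% reduction criteria on the same made-up cases.
for threshold in (100, 80, 50):
    sensitivity, specificity = sens_spec(CASES, threshold)
    print(f"{threshold}% criterion: sensitivity {sensitivity:.0%}, specificity {specificity:.0%}")
```

With these made-up cases, loosening the criterion from all-or-none to 50% takes sensitivity from 50% up to 100% while specificity falls from 100% down to 50%. Real numbers will depend on the patient population, the recording technique and how a "change" is defined.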

One last observation on sensitivity-specificity.

For the neuromonitoring tech in the room, we can find ourselves in a tough situation. A lot of our surgeons only want to be told about something if it is a problem. Giving far too many false alarms is an easy way to get kicked out of their room.

For the oversight neuromonitoring doctor, we can find ourselves in a tough situation. We are there to make sure that the surgeon is informed of possible deficits. And because some causes are time dependent, the sooner the surgeon is informed and can take corrective measures, the better.

I’ve been on both sides of the monitor: as the clinician in the room, as the remote doc overseeing the case, and as the one doing cases in the operating room without any other oversight.

There are definitely different emotional factors at play.

In the operating room, it is easier to lean towards making sure specificity is not forgotten about. You don’t want any false negatives, but you’re not looking to jump the gun either and become a false positive machine.

Overseeing someone else running the case is a little nerve-racking. There is a loss of control, and the talent level on the other side of the monitor can vary greatly. Human instinct makes you lean more towards making sure all changes are reported and sensitivity is as high as possible. Specificity sometimes takes a back seat.

Remember, I am talking about human emotions here.

My observation: not many remote docs work in the OR (and some never have), and not too many clinicians working in the OR also do oversight (usually an in-house program or a DABNM falls into this role, and there are only about 150 active DABNMs).

My question:

Want new articles before they get published?

Subscribe to our Awesome Newsletter.

Keep Learning

Here are some related guides and posts that you might enjoy next.

How To Have Deep Dive Neuromonitoring Conversations That Pays Off…

How To Have A Neuromonitoring Discussion One of the reasons for starting this website was to make sure I was part of the neuromonitoring conversation. It was a decision I made early in my career... and I'm glad I did. Hearing the different perspectives and experiences...

Intraoperative EMG: Referential or Bipolar?

Recording Electrodes For EMG in the Operating Room: Referential or Bipolar? If your IONM manager walked into the OR in the middle of your case, took a look at your intraoperative EMG traces and started questioning your setup, could you defend yourself? I try to do...

BAER During MVD Surgery: A New Protocol?

BAER (Brainstem Auditory Evoked Potentials) During Microvascular Decompression Surgery You might remember when I was complaining about using ABR in the operating room and how to adjust the click polarity to help obtain a more reliable BAER. But my first gripe, having...

Bye-Bye Neuromonitoring Forum

Goodbye To The Neuromonitoring Forum One area of the website that I thought had the most potential to be an asset for the IONM community was the neuromonitoring forum. But it has been several months now and it is still a complete ghost town. I'm honestly not too...

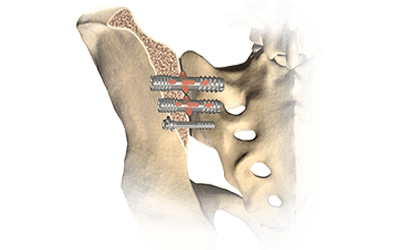

EMG Nerve Monitoring During Minimally Invasive Fusion of the Sacroiliac Joint

Minimally Invasive Fusion of the Sacroiliac Joint Using EMG Nerve Monitoring EMG nerve monitoring in lumbar surgery makes up a large percentage of cases monitored every year. Using EMG nerve monitoring during SI joint fusions seems to be less utilized, even though the...

Physical Exam Scope Of Practice For The Surgical Neurophysiologist

SNP's Performing A Physical Exam: Who Should Do It And Who Shouldn't... Before any case is monitored, all pertinent patient history, signs, symptoms, physical exam findings and diagnostics should be gathered, documented and relayed to any oversight physician that may...

0 Comments